This is an updated version of my previous article, NixOS + Docker with MacVLAN (IPv4). It addresses ARP Flux, which was causing networking problems. Skip to the summary at the bottom if you just want to read the conclusion.

Ever since my new server build-out, I had been having intermittent issues with my MacBook (OSX) randomly losing its connection to the server, but only for a brief time. I noticed it mostly when using mosh, which would indicate that I’d lost connection, but self-hosted web UIs like Grafana also gave me problems.

Ping would also fail, but then it would start to work after a minute or so. What was weird is that while I could not ping my new server (192.168.1.79), I could have a stable mosh connection to another box (192.168.1.140), which could see the new server (192.168.1.79) just fine. This made it seem very much like something was wrong with OSX. I was guilty of mostly ignoring this because, while it did impact me, it didn’t happen frequently. It was still very annoying.

This is also an exploration of using Google Gemini, which you can use from an incognito tab with no login required. It didn’t immediately get me to the right answer, but we eventually got there.

The first iteration didn’t really get me very far, only a suggestion to check the route on my OSX machine when the problem was happening. This meant I had to wait around for it to break – and catch it in the act.

    % route -n get myserver.lan
       route to: 192.168.1.79
    destination: 192.168.1.79
      interface: en0
          flags: <UP,HOST,DONE,LLINFO,WASCLONED,IFSCOPE,IFREF>
     recvpipe  sendpipe  ssthresh  rtt,msec    rttvar  hopcount      mtu     expire
           0         0         0         0         0         0      1500      1167

Unfortunately the output from this didn’t change with broken networking vs. working. At this point I added the detail that this networking problem seemed to happen most often after waking the OSX machine from sleep. Gemini was then able to reason:

In your route output, the flag LLINFO stands for Link Layer Information. This means macOS has a cached MAC address for 192.168.1.79.

When a Mac sleeps, it often hands off its networking responsibilities to a “Sleep Proxy” (like an Apple TV or HomePod) or simply drops its ARP table. When it wakes up, it might be trying to use a stale hardware address, or the Linux machine (myserver.lan) might not be responding to the Mac’s “Who has 192.168.1.79?” request fast enough.

The next suggestion was to check the arp information when networking was broken.

    arp -a | grep 192.168.1.79

- If it says (incomplete), the Mac is sending requests but getting no answer.

- If it shows a MAC address, compare it to the actual MAC address of the Linux box. If they match, the physical path is clear but the packets are being dropped higher up.
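That comparison is easy to get wrong by eye, so here is a minimal sketch of it as a shell helper. The function names are my own invention, and it assumes the macOS `arp` output shape of `host (ip) at mac on en0 ...`, where octets may be printed without leading zeros.

```sh
#!/bin/sh
# Pad every octet to two lowercase hex digits,
# e.g. "6:0:0:0:0:67" becomes "06:00:00:00:00:67".
normalize_mac() {
    printf '%s\n' "$1" | tr 'A-F' 'a-f' | awk -F: '{
        out = ""
        for (i = 1; i <= NF; i++) {
            octet = (length($i) == 1) ? ("0" $i) : $i
            out = out (i > 1 ? ":" : "") octet
        }
        print out
    }'
}

# classify_arp_line LINE EXPECTED_MAC
# Prints "incomplete", "match" or "mismatch" for one line of arp output.
classify_arp_line() {
    line=$1
    expected=$(normalize_mac "$2")
    case $line in
        *"(incomplete)"*) echo "incomplete"; return ;;
    esac
    # The MAC is the word that follows " at " in the arp output.
    seen=$(printf '%s\n' "$line" | sed -n 's/.* at \([0-9A-Fa-f:]*\) .*/\1/p')
    if [ "$(normalize_mac "$seen")" = "$expected" ]; then
        echo "match"
    else
        echo "mismatch"
    fi
}
```

On the Mac this could be called as `classify_arp_line "$(arp -a | grep 192.168.1.79)" "$SERVER_MAC"`, where `SERVER_MAC` is a variable you set to the hardware address of the server's physical NIC.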

This got me looking at my WatchYourLan deployment. This is a network monitoring tool that I use to alert me to new devices (based on MAC addresses) on my network.

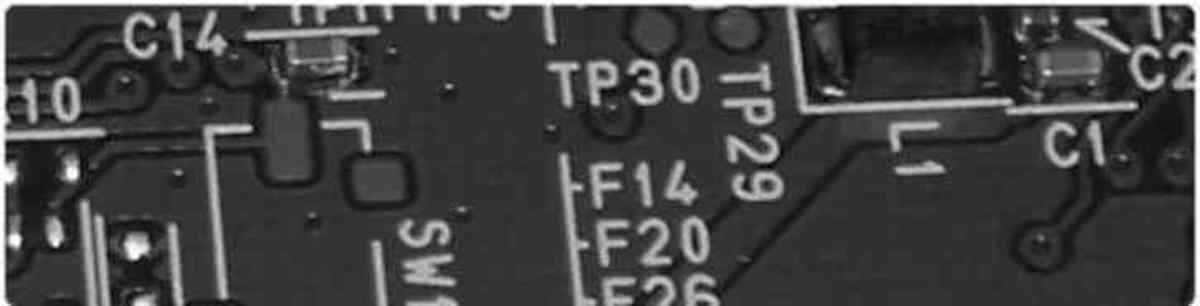

To my surprise – I found another MAC 82:d4:9a:8f:06:40 for myserver.lan, one that I didn’t expect to see. Gemini called this a smoking gun, as it is a Locally Administered Address (LAA) – also known as a generated MAC. Gemini also concludes that this may be a Docker issue, which somewhat confirms what I was thinking. The advice from Gemini is getting more specific now, but I also find that in general this is where AI generated output can go off the rails so caution is required.

The Pattern: You have a “Bridge” problem

Seeing four different randomized MACs for a single IP (192.168.1.79) on a wired Linux machine is a classic symptom of Docker Userland Proxy or Bridge-utils misconfiguration.

When you run containers or virtual machines in “Bridge Mode,” the Linux kernel creates a virtual switch (br0, docker0, etc.).

The physical NIC (eth0) has its own hardware MAC.

The Bridge (br0) often generates its own MAC (like one of these A6 or 52 ones).

Each container gets a virtual interface (veth) with a randomized MAC.

I then remember to add the detail that this Linux machine is a NixOS installation, and Gemini comes back with

NixOS with a complex Docker setup is the “perfect storm” for this. NixOS tends to be very explicit about interface creation, and Docker’s default bridge behavior often clashes with how NixOS manages its own networking stack.

It suggests a “ghost hunter” command to identify bad MACs:

    ip link show | grep -iE '..:[0-9a-f][26ae]:' -B 1

If these “ghost” MACs are responding to ARP requests on your physical LAN (a phenomenon called ARP Flux), it’s usually because the Linux kernel is being too “helpful” by answering ARP requests for an IP on any interface, even if the request came in on a different one.
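What makes an address locally administered is a single bit: the second-least-significant bit (0x02) of the first octet, which is why the second hex digit of that octet ends up being 2, 6, a or e. As a minimal sketch, the bit can be tested directly instead of pattern matching. The function name is hypothetical.

```sh
#!/bin/sh
# is_locally_administered MAC
# Succeeds (exit status 0) when bit 0x02 of the first octet is set,
# which marks a generated (locally administered) address.
is_locally_administered() {
    first_octet=${1%%:*}                  # text before the first colon
    [ $(( 0x$first_octet & 2 )) -ne 0 ]
}
```

For example, `is_locally_administered 82:d4:9a:8f:06:40 && echo generated` prints `generated` for the unexpected MAC that WatchYourLan showed me.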

It also suggests a change to my configuration.nix file, which in retrospect was pretty close to the final solution – but not quite. At this point I’m pretty impressed with Gemini being able to help with NixOS specifics. It also asks the question

Does your NixOS config happen to have any bridge interfaces (networking.bridges) or macvlan setups defined manually?

Why, yes, yes I do, I reply. Gemini then fires back:

This macvlan-shim is the “smoking gun” for your macOS networking issues.

Why this breaks your Mac’s connection

By default, Linux is very “promiscuous” with ARP. When your Mac sends an ARP request asking “Who is 192.168.1.79?”, the Linux kernel sees that request on enp3s0. Because the macvlan-shim is bridged to that same physical card, the kernel might answer the Mac using the macvlan’s generated MAC address instead of the physical NIC’s hardware address.

This is known as ARP Flux. Your Mac gets confused because it sees multiple MAC addresses (the “ghosts” we found earlier) all claiming to be the same IP or living on the same segment. When the Mac wakes from sleep, it’s a race: if the “shim” MAC wins the ARP race, the Mac sends traffic to a virtual interface that isn’t configured to handle the host’s primary traffic.

It sure likes the term “smoking gun”, but it feels like we’re close to identifying the real root problem in my setup. I had previously made some changes to the way I deployed the various docker containers on my macvlan network to set static MAC addresses, but had not yet figured out how to give the shim IP a static MAC address. The evidence I have and the advice from Gemini are starting to align. It is odd that I got to this configuration from a previous setup using Ubuntu which didn’t have an issue, or maybe that was just luck due to timing.

I asked a lot of clarifying questions about the solutions it was proposing, which helped me iterate towards a more complete understanding of the solution and also generated some options. A minimalist solution was suggested, but it felt like a lot of ‘trust in the force’:

    boot.kernel.sysctl = {
      # This is the "Nuclear Option" that covers everything at once
      "net.ipv4.conf.all.arp_ignore" = 1;
      "net.ipv4.conf.all.arp_announce" = 2;
    };

If you added this to my previous article on NixOS + Docker + MacVLAN you’d probably be fine. However, here is the more complete solution I ended up using:

    {
      networking.macvlans."myNewNet-shim" = {
        mode = "bridge";
        interface = "enp3s0";
      };
      networking.interfaces."myNewNet-shim" = {
        macAddress = "06:00:00:00:00:67";
        ipv4.addresses = [{ address = "192.168.1.67"; prefixLength = 32; }];
        ipv4.routes = [{ address = "192.168.1.64"; prefixLength = 30; }];
      };

      # We need to prevent ARP Flux and silence any ghost replies.
      # Ensure the kernel only answers for 192.168.1.79 on the physical
      # card and for containers on the virtual IPs.
      boot.kernel.sysctl = {
        # Only reply if the target IP is on the specific
        # interface receiving the request
        "net.ipv4.conf.all.arp_ignore" = 1;             # Required, only reply to ARP for your interface
        "net.ipv4.conf.enp3s0.arp_ignore" = 1;          # Redundant, covered by above all setting
        "net.ipv4.conf.myNewNet-shim.arp_ignore" = 1;   # Recommended, keeps shim silent

        # Ensure ARP announcements use the primary IP of the interface
        "net.ipv4.conf.all.arp_announce" = 2;           # Required, always use the right interface
        "net.ipv4.conf.enp3s0.arp_announce" = 2;        # Redundant, covered by above all setting
        "net.ipv4.conf.myNewNet-shim.arp_announce" = 2; # Recommended, do not leak MACs
      };
    }

Again, if you compare with the original article, you can see that I’ve added a specific MAC address for the shim, using a naming convention that makes the last octet of the MAC match the last octet of the IP address. The big change is the boot.kernel.sysctl block, which is very similar to the minimal setup above.
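The “Redundant” comments in that config follow from how the kernel combines these settings: for both arp_ignore and arp_announce it uses the larger of the `all` value and the per-interface value. Here is a minimal sketch for checking what is actually in effect on the server. The function names are hypothetical, and it reads the standard /proc/sys paths.

```sh
#!/bin/sh
# effective_arp_value ALL_VALUE IFACE_VALUE
# The kernel applies the maximum of conf/all and conf/<iface>.
effective_arp_value() {
    if [ "$1" -ge "$2" ]; then echo "$1"; else echo "$2"; fi
}

# show_arp_settings IFACE
# Prints the configured and effective arp_ignore / arp_announce values.
show_arp_settings() {
    for key in arp_ignore arp_announce; do
        all=$(cat "/proc/sys/net/ipv4/conf/all/$key")
        own=$(cat "/proc/sys/net/ipv4/conf/$1/$key")
        echo "$1 $key: all=$all iface=$own effective=$(effective_arp_value "$all" "$own")"
    done
}
```

Running `show_arp_settings enp3s0` and `show_arp_settings myNewNet-shim` after a rebuild is a quick way to confirm the sysctl values really landed on both interfaces.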

I also use the new MAC address numbering scheme for each of my macvlan containers, assigning them 06:00:00:00:00:XX where XX is the last octet of the IP. It makes it very easy to see that each container has the right assignment.
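The numbering scheme is simple enough to generate. This is a minimal sketch with a hypothetical function name. Note that it writes the decimal octet as-is into the MAC (so .67 becomes :67), which only works while the last octet fits in two digits, as it does for my 192.168.1.64/30 range.

```sh
#!/bin/sh
# mac_for_ip IPV4_ADDRESS
# Prints 06:00:00:00:00:XX where XX is the last octet of the IP, zero padded.
mac_for_ip() {
    last_octet=${1##*.}                   # text after the last dot
    if [ "$last_octet" -gt 99 ]; then
        echo "last octet $last_octet does not fit in two digits" >&2
        return 1
    fi
    printf '06:00:00:00:00:%02d\n' "$last_octet"
}
```

For example, `docker run --network myNewNet --ip 192.168.1.65 --mac-address "$(mac_for_ip 192.168.1.65)" nginx` would pin both the address and the MAC, where `nginx` stands in for whatever image you run.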

Now this had some interesting side effects. The shim IP continued to offer up a stale generated MAC address. I was able to fix this by forcing a recreate (though I suspect a reboot might also have solved the problem).

    sudo ip link set myNewNet-shim down
    sudo ip link delete myNewNet-shim
    sudo ip link add myNewNet-shim link enp3s0 type macvlan mode bridge
    sudo ip link set myNewNet-shim address 06:00:00:00:00:67
    sudo ip link set myNewNet-shim up

A few other things also broke due to this more restrictive ARP control. Previously a container living on the macvlan network (like my nginx for local web services – on IP 192.168.1.65) could see my server (192.168.1.79). The myNewNet-shim provided visibility from the host to the nginx (192.168.1.65).

In this new no ARP Flux world, I have to use the shim address (192.168.1.67) in order to connect to my server (192.168.1.79) – so everywhere I reference x.x.x.79 I now needed to use x.x.x.67.

[Edit: after running like this for a bit, I realized I was having the same ARP Flux issue – this section is bad advice Gemini gave me]

I’ve realized that this makes my wireguard setup a bit more annoying: if I use wireguard to connect home from remote and want to reach my server, I need to use the shim address instead of the local DNS name that maps to the real address. This was too big a trade-off, so I went back to Gemini and had a long discussion (with a lot of dead ends) to arrive at a solution that adds back the visibility of the host (192.168.1.79) from the macvlan containers (192.168.1.64, for example).

    # Create a "stealth" host IP on the macvlan bridge.
    # This allows containers/WireGuard to talk to the host at 192.168.1.79.
    # 'scope host' is critical: it prevents ARP Flux by ensuring the host
    # never advertises this IP to the physical network (enp3s0).
    systemd.services."network-addresses-myNewNet-shim" = {
      # We use the absolute path to 'ip' to avoid needing to set the 'path' variable
      postStart = "${pkgs.iproute2}/bin/ip addr add 192.168.1.79/32 dev myNewNet-shim scope host || true";
    };

Now using wireguard, I can see the full local network – host included.

[Edit: the above fix didn’t work, I still had ARP Flux problems. The simplest solution was simply to inject an ip route add 192.168.1.79 via 192.168.1.55 into each of the macvlan containers. Since many of my containers are linuxserver.io based, I can use the custom script pattern they support]
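As a rough sketch of what such a custom script could look like: linuxserver.io images run scripts placed in /custom-cont-init.d at container start. The file name and function name below are hypothetical, I use `ip route replace` rather than `ip route add` so that re-running it is harmless, and it assumes the container has the `ip` tool and was started with the NET_ADMIN capability.

```sh
#!/bin/sh
# Hypothetical /custom-cont-init.d/10-host-route.sh
HOST_IP=192.168.1.79   # the server's real address
VIA_IP=192.168.1.55    # next hop, as in the route quoted above

# Adds (or refreshes) the route to the host.
# With DRY_RUN=1 it only prints the command it would run.
ensure_host_route() {
    cmd="ip route replace $HOST_IP via $VIA_IP"
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$cmd"
    else
        $cmd
    fi
}

# Do not abort container start-up if the route cannot be installed.
ensure_host_route || echo "could not install route to $HOST_IP" >&2
```

The dry-run switch is only there so the script can be checked outside a container before mounting it.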

Less ARP chaos is a good thing. Gemini did suggest that instead of declaring the route for the shim with a CIDR block that includes the shim address, I could be even more specific and simply have a route per IP address:

    ipv4.routes = [
      # 1. Route the WireGuard container specifically
      { address = "192.168.1.64"; prefixLength = 32; }
      # 2. Route your other containers specifically
      { address = "192.168.1.65"; prefixLength = 32; }
      { address = "192.168.1.66"; prefixLength = 32; }
      # 3. Keep your WireGuard tunnel route
      { address = "10.13.13.0"; prefixLength = 24; via = "192.168.1.64"; }
    ];

The key difference here is using /32 instead of /30. Being specific spares the Linux kernel from having to figure out what to do with the shim address (192.168.1.67), but it seems to do the right thing, so I’ve gone with the simpler declaration. I’ve also got a special route in there for wireguard addresses so my host can see the wireguard clients directly.
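To see why the /30 route sweeps up the shim address, it helps to expand the block. This is a minimal sketch with a hypothetical function name. To keep the arithmetic inside the last octet it only handles prefix lengths from /24 to /32.

```sh
#!/bin/sh
# cidr_hosts BASE_IP PREFIX_LEN
# Prints every address in the block, one per line.
cidr_hosts() {
    if [ "$2" -lt 24 ] || [ "$2" -gt 32 ]; then
        echo "only /24 to /32 are supported" >&2
        return 1
    fi
    prefix=${1%.*}                        # e.g. "192.168.1"
    last=${1##*.}                         # e.g. "64"
    size=$(( 1 << (32 - $2) ))            # number of addresses in the block
    start=$(( last / size * size ))       # align down to the block boundary
    i=0
    while [ "$i" -lt "$size" ]; do
        echo "$prefix.$(( start + i ))"
        i=$(( i + 1 ))
    done
}
```

`cidr_hosts 192.168.1.64 30` lists .64 through .67, so the single /30 route covers the three container addresses plus the shim itself, while each /32 route covers exactly one address.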

Verifying the fix can be done on the OSX machine

We want to review all of the MAC addresses to make sure we have the expected ones that follow the pattern 06:00:00:00:00:xx. If all goes well, this is the end of the ghosting problem (ARP Flux) that caused the annoying interruptions in networking from my OSX machine to the server.
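One way to do that review without squinting is to filter the ARP table for generated MACs that fall outside the scheme. This is only a sketch: the function name is hypothetical, and it assumes `arp -a` style lines on stdin with the MAC in the fourth field, possibly printed without leading zeros.

```sh
#!/bin/sh
# find_ghost_macs
# Reads arp output on stdin and prints "ip mac" for every entry whose MAC is
# locally administered but does not match 06:00:00:00:00:xx.
find_ghost_macs() {
    awk '
    $3 == "at" && $4 != "(incomplete)" {
        n = split(tolower($4), o, ":")
        mac = ""
        for (i = 1; i <= n; i++) {
            if (length(o[i]) == 1) o[i] = "0" o[i]   # restore leading zero
            mac = mac (i > 1 ? ":" : "") o[i]
        }
        laa = (substr(o[1], 2, 1) ~ /[26ae]/)        # generated address?
        in_scheme = (mac ~ /^06:00:00:00:00:[0-9][0-9]$/)
        if (laa && !in_scheme) {
            ip = $2
            gsub(/[()]/, "", ip)                     # "(1.2.3.4)" to "1.2.3.4"
            print ip, mac
        }
    }'
}
```

On the Mac, `arp -a | find_ghost_macs` printing nothing is the result I am hoping for.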

In summary, here is the full docker macvlan setup on NixOS. This is basically a brief retelling of the original post, with all of the updates above merged in.

First enable docker support on NixOS. You need a single line added to your /etc/nixos/configuration.nix:

    virtualisation.docker.enable = true;

Create your docker macvlan network.

    $ docker network create -d macvlan -o parent=enp3s0 \
      --subnet 192.168.1.0/24 \
      --gateway 192.168.1.1 \
      --ip-range 192.168.1.64/30 \
      --aux-address 'host=192.168.1.67' \
      myNewNet

Docker will persist this network configuration across reboots.

Now we need to modify /etc/nixos/configuration.nix to fix routing to/from our macvlan network IPs from the host, and to avoid causing ARP Flux.

    networking.macvlans."myNewNet-shim" = {
      mode = "bridge";
      interface = "enp3s0";
    };
    networking.interfaces."myNewNet-shim" = {
      macAddress = "06:00:00:00:00:67";
      ipv4.addresses = [{ address = "192.168.1.67"; prefixLength = 32; }];
      ipv4.routes = [{ address = "192.168.1.64"; prefixLength = 30; }];
    };

    # ARP Flux Protection
    # These sysctl settings ensure the kernel's ARP behavior is strictly
    # tied to the specific interface receiving the request.
    boot.kernel.sysctl = {
      # Only reply if the target IP is on the specific
      # interface receiving the request
      "net.ipv4.conf.all.arp_ignore" = 1;             # Required, only reply to ARP for your interface
      "net.ipv4.conf.enp3s0.arp_ignore" = 1;          # Redundant, covered by above all setting
      "net.ipv4.conf.myNewNet-shim.arp_ignore" = 1;   # Recommended, keeps shim silent

      # Ensure ARP announcements use the primary IP of the interface
      "net.ipv4.conf.all.arp_announce" = 2;           # Required, always use the right interface
      "net.ipv4.conf.enp3s0.arp_announce" = 2;        # Redundant, covered by above all setting
      "net.ipv4.conf.myNewNet-shim.arp_announce" = 2; # Recommended, do not leak MACs
    };

This was a bit of a journey, but if you were just looking for a clean way to get macvlan networks working with NixOS and Docker, hopefully it is presented in a way that is straightforward to follow. Also, we touched on using AI to help us explore solutions, and I do encourage you to use it as a tool rather than a magic eight ball. Along the way many wrong answers were presented, but by asking for more details and for ways to test the assumptions and changes, I was able to learn more about the problem and arrive at what I think is a good solution.

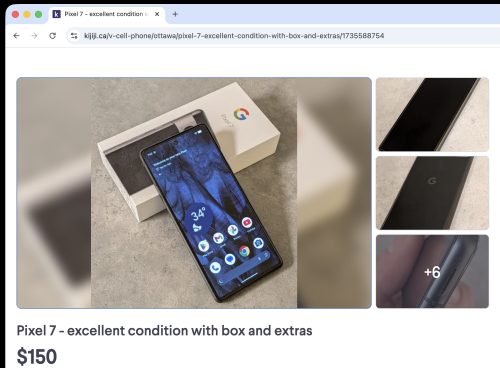
