It was probably 2 years ago when I started talking about the terabyte club. This was before 1TB drives were common (and cheap); the sweet spot for price/capacity was around 250GB, but four of those would net you 1TB for under $500. Considering I spent $2000 on my first 1GB SCSI drive back in 1990, being able to get 1000X the storage for a lot less still makes me sit back and think wow.

I failed to properly join the terabyte club 2 years ago. I suspect that sometime in the last year or so the total capacity of my machines at home topped 1TB of storage, but my recent purchase of a WD Caviar Green 1TB drive ensured that I was “in the club”. (It also lives in my webserver, so it’s effectively “online”.)

I had my eye on the Caviar Green series ever since I saw the Tom’s Hardware review that showed that the Green drives had real savings in power consumption. Their power use under load is closer to typical, but for the most part my server is idle (though always on). At the larger capacities, I just don’t trust the even more power-friendly laptop drives.

I watched the price canada page for the 1TB model over a couple of weeks, and once the price fell below $100 I knew it was inevitable I’d buy one. Initially I was going to get it at PCCyber for $87.99 (the price has since dropped), but karma dictated that they were out of stock the day I was going to buy one. I then decided to head off to Canada Computers where they had it for $84.99. Wouldn’t you know it, when I got back from the store with my purchase in hand there was a NewEgg.ca deal for $79.99 (with free shipping). It seems the best local price currently is $77.77.

The D945GCLF2D (dual core Atom system) that is my server has only 2 SATA ports, both of which are in use. So I used the PCI 4-port SATA card I had from a previous machine to host the new drive. Sadly, after booting I noticed the following message(s) in the log:

Oct 24 14:47:03 lowtek kernel: [ 246.357277] BUG: soft lockup - CPU#0 stuck for 11s! [kacpid:62]

Prior to this new hardware addition, my server had been up for 172 days, so it has been very reliable. The other symptom was the kacpid process eating 100% CPU. It turns out this is a known bug, and there is a workaround. Strange how it was the addition of the PCI card which triggered the problem for me. Simply disabling the System Fan Control in the BIOS remedied the problem, and the system seems solid once again.
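If you want to check your own logs for the same symptom, something like the following would do it. This is only a rough sketch: the function name `parse_soft_lockup` and the pattern are my own, and the regular expression assumes the message has the same shape as the single line quoted above (it accepts either a plain hyphen or an en dash after "soft lockup", since blog software tends to swap one for the other).

```python
import re

# Pattern modelled on the one log line quoted in this post; other kernel
# versions may word the message differently (assumption).
LOCKUP_RE = re.compile(
    r"BUG: soft lockup\s+[-\u2013]\s+CPU#(?P<cpu>\d+) stuck for (?P<secs>\d+)s! "
    r"\[(?P<proc>[^:\]]+):(?P<pid>\d+)\]"
)


def parse_soft_lockup(line):
    """Return the CPU, duration, process name and PID, or None if no match."""
    match = LOCKUP_RE.search(line)
    if match is None:
        return None
    return {
        "cpu": int(match.group("cpu")),
        "seconds": int(match.group("secs")),
        "process": match.group("proc"),
        "pid": int(match.group("pid")),
    }


sample = (
    "Oct 24 14:47:03 lowtek kernel: [ 246.357277] "
    "BUG: soft lockup - CPU#0 stuck for 11s! [kacpid:62]"
)
info = parse_soft_lockup(sample)
```

Feeding each line of a log file through `parse_soft_lockup` and counting the non-`None` results per process name would quickly show whether kacpid is the repeat offender.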

When I initially set up the server machine, I added a CD-ROM drive. I’ve since learned that CD-ROM drives are pretty power hungry, so I removed it and added my new 1TB volume. My UPS statistics show the same 9% load both before and after, not bad considering the increase in storage capacity.

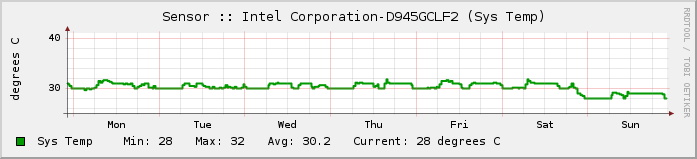

Looking at sensor data we see the following:

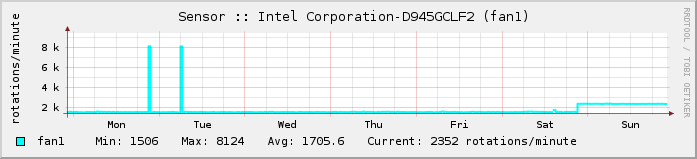

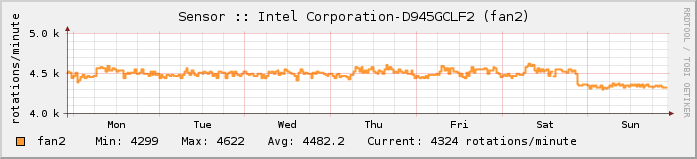

The overall system temperature has gone down. To explain this we look at the fan data:

As expected, the BIOS changes have influenced fan speeds. One has gone up, the other down(?). Fan1 is a large 92mm case fan that blows down onto the mainboard; fan2 is a 40mm chipset cooler on the mainboard.

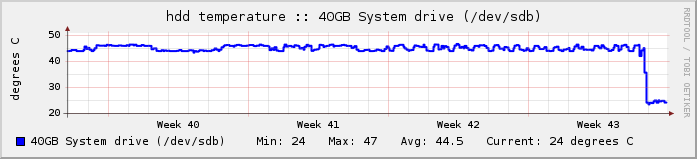

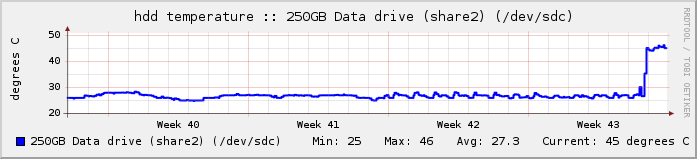

The last bit of data is hard drive temperatures:

So what happened here? Simple, the addition of the PCI->SATA card has caused the numbering of the drives to change. Thus while a quick glance at the results may be cause for alarm, careful examination of all of the logs shows that the drives are running at exactly the same temperatures as they were before.
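One way to avoid this confusion is to key the temperature history on something that survives renumbering, such as the drive's serial number, instead of the device name. Here is a minimal sketch of the idea. The helper `rekey_by_serial`, the serial strings and all of the readings are made up for illustration; they are not taken from my logs, and how you obtain the device-to-serial mapping is left as an assumption.

```python
def rekey_by_serial(temps_by_device, serial_by_device):
    """Return {serial: temperature}, skipping devices with no known serial."""
    return {
        serial_by_device[dev]: temp
        for dev, temp in temps_by_device.items()
        if dev in serial_by_device
    }


# Hypothetical readings before the new card: two drives, sda and sdb.
before = rekey_by_serial(
    {"sda": 30, "sdb": 33},
    {"sda": "SERIAL-A", "sdb": "SERIAL-B"},
)

# Hypothetical readings after: the new drive takes sda and the others shift.
after = rekey_by_serial(
    {"sda": 24, "sdb": 30, "sdc": 33},
    {"sda": "SERIAL-NEW", "sdb": "SERIAL-A", "sdc": "SERIAL-B"},
)

# Drives whose temperature is the same once compared by serial number.
unchanged = {serial for serial in before if after.get(serial) == before[serial]}
```

Compared by device name, "sda" appears to have dropped from 30 to 24 in this made-up data; compared by serial, both original drives are exactly where they were and the 24 belongs to the new drive.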

In summary, this drive was inexpensive, it runs cool (24C) and quiet, and most importantly it is power efficient. In terms of reliability, we’ll have to see in a couple of years.

Sorry, not related to the post but just wanted to pass on a “Wickle” to Quixotic. How’s that for late 80’s?

Dave – You didn’t mention your C64 BBS with 20MB hard drive – and how much that cost 🙂

Actually, it was 10 Meg. That was a sweet setup back then with the “SIMSTIM” switch to swap back and forth between the BBS on the C64 and my C128 for play.

I think I paid around $250 for that Xetec Lt. Kernal HD back then. Ouch.

Plus I had that IEEE box with the equivalent of 3 X 1541’s and the 1581.

Looking back I kinda wish I still had all that instead of selling it off to get the Amiga 500. I’d probably still fire it up and watch all those old demos you and Ryan made.

Tell him I say…”DRUG RAID AT 4AM” *POUNCE*